How to Build a Foresight Process Your Leadership Team Will Actually Use

Most organisations scan the environment. Almost none connect what they find to decisions that matter.

It happens all the time. A leadership team gathers at an offsite. Somebody presents a slide on AI. There is a discussion about regulatory risk. Then everyone goes back to business as usual the next day.

The strategy acknowledges the external forces, but the budget allocates against internal priorities. The gap between what the team knows is changing and what the team actually does about it grows wider each quarter.

By the end of this article, you should be able to answer three questions:

What are the three structural forces your strategy is organised around?

When did your leadership team last change a resource allocation decision because of a shift in one of those forces?

Who is responsible for monitoring those forces?

If those questions feel uncomfortable, the process described here is designed to close exactly that gap.

The gap is visible empirically: my research across 8,430 companies found it in the data. Every firm I analysed discussed external forces to some degree. HP mentioned technology, Nokia referenced consumer devices, and Amgen wrote about globalisation. But those forces did not shape the firms’ decisions. The superfirms paid disproportionate attention to growth-oriented, outward-facing forces. Their competitors treated the same forces as background noise. The difference lay in whether foresight had been connected to the decisions that allocate capital, talent, and organisational energy.

Closing that gap requires a process, and the annual offsite with a guest speaker on trends does not qualify. Neither does the consulting engagement that produces a 100-page report nobody reads. What works is something less glamorous: a process that runs continuously and feeds directly into the decisions that shape the firm’s direction.

Why the strategy offsite fails

The output of a strategy review is typically a set of slides: observations that are broadly accurate and almost entirely disconnected from the capital allocation decisions that will be made in the following months.

This fails for a specific reason. External forces do not change on an annual cycle. A force that was emerging in 2022 may be accelerating by 2026. Nokia’s leadership reviewed its competitive environment every year throughout the 2000s. The reviews noted the emergence of smartphone computing. But Nokia held over 40% of global handset market share in 2007, and that dominance made the annual cadence feel adequate. Then Apple launched the iPhone and captured a position that Nokia would never recover. The annual cycle failed not because Nokia’s analysts were uninformed but because the company did very little with what they saw.

Cisco, by contrast, is one of the superfirms in my research, and its approach is completely different. John Chambers described his competitive logic in terms of “market transitions,” not competitors, and Cisco’s capital allocation reflected this: the company made over 200 acquisitions in two decades, each tested against whether it strengthened Cisco’s position on the forces reshaping networking and communications technology. Cisco did not know more about market forces than Nokia did. It acted on what it knew faster, feeding foresight directly into acquisition decisions on a continuous basis rather than reviewing it annually and filing it.

Who should be in the room

The composition of the foresight group matters more than most organisations recognise. The default is to assign foresight to the strategy team, which produces rigorous analysis that the operating executives have no ownership of and therefore ignore. The opposite failure is to make it a CEO-only exercise, which produces conviction at the top and bewilderment everywhere else.

In my experience, the groups that work best are small, senior, and cross-functional: six to eight people. That means the CEO or equivalent, the heads of the two or three largest business units, the CFO (because foresight without a connection to capital allocation is academic), and one or two people from outside the core leadership who bring a different perspective: a technology leader, a head of corporate development, or someone with deep customer contact. The cross-functional composition matters because forces look different from different positions in the organisation. A demographic shift that the head of product sees as a design challenge looks like a revenue risk to the CFO and an acquisition opportunity to corporate development. Those three perspectives on the same force are what make the scanning exercise strategically useful rather than merely analytically interesting. Larger groups default to presentation mode. Smaller ones lack the diversity of perspective that makes the scanning productive.

One person who should not be in the room is an external consultant running the process. The foresight group needs to own its conclusions. When an outside firm presents the analysis and the leadership team reacts to it, something shifts: the discussion becomes an evaluation of the consultant’s work instead of a debate about the firm’s strategic direction. I have watched this happen repeatedly. The leadership team engages with the slides, not the forces. They offer polite feedback instead of genuine disagreement, and leave the room having discussed someone else’s view of their environment. External input is valuable for specific questions, but the scanning and prioritisation process must be owned internally or it will not survive the first quarter in which operational pressures compete for the leadership team’s time.

What the process produces

The output of a foresight process is a prioritised view of the external forces reshaping the firm’s competitive environment, updated regularly and connected to specific decisions.

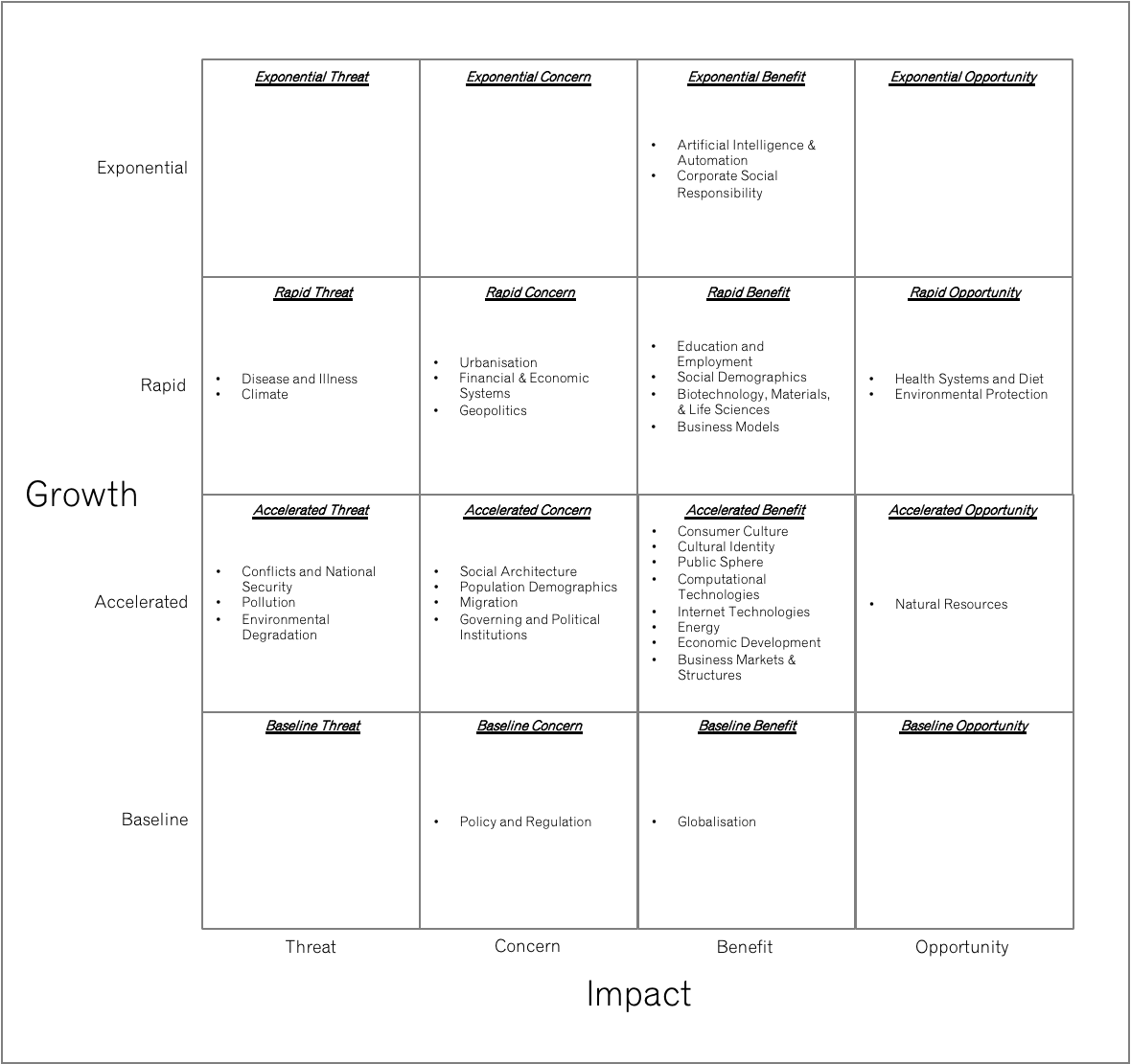

I developed the Growth-Impact Matrix to help with this:

The horizontal axis plots impact: whether the force represents an opportunity or a threat to the firm, assessed through a combination of revenue exposure, competitive positioning, and capability alignment. The vertical axis plots momentum: how fast the force is growing, scored from baseline (growing at or below GDP rate) through accelerated and rapid to exponential (growing at 15% or more annually). The team arrives at a shared position for each force through structured discussion, typically with one member presenting a preliminary assessment and the group debating until consensus emerges. Voting can break deadlocks, but the conversation is where the value lies.

The resulting matrix produces four broad zones. Forces in the upper right (high momentum, positive impact) are the ones to commit to and build around. Forces in the upper left (high momentum, negative impact) demand defensive investment. Forces in the two lower zones (low momentum, whether the impact is positive or negative) deserve monitoring but not strategic commitment, and the discipline of leaving them there, rather than escalating every emerging trend into a strategic priority, is part of what makes the matrix useful.
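For teams that keep the matrix in a spreadsheet or a small script, the zone logic above can be sketched in a few lines of Python. This is an illustration only, not part of the Growth-Impact Matrix itself. The numeric impact scale, the decision to treat "rapid" and above as the upper half, and every name in the code are assumptions made for the example.

```python
from dataclasses import dataclass
from enum import IntEnum


class Momentum(IntEnum):
    """The four momentum levels named in the text, lowest to highest."""
    BASELINE = 0      # growing at or below GDP rate
    ACCELERATED = 1
    RAPID = 2
    EXPONENTIAL = 3   # growing at 15% or more annually


@dataclass(frozen=True)
class Force:
    name: str
    momentum: Momentum
    # Assumed scale: -1.0 (clear threat) to +1.0 (clear opportunity).
    impact: float


def zone(force: Force) -> str:
    """Return the response the matrix zone implies for a force.

    Assumption: RAPID and EXPONENTIAL form the upper half of the matrix.
    A force with an impact of exactly zero is treated as a threat here.
    """
    if force.momentum < Momentum.RAPID:
        return "monitor"
    return "commit" if force.impact > 0 else "defend"


# Hypothetical forces, for illustration only.
forces = [
    Force("Force A", Momentum.EXPONENTIAL, 0.6),
    Force("Force B", Momentum.RAPID, -0.4),
    Force("Force C", Momentum.ACCELERATED, 0.8),
]

for f in forces:
    print(f"{f.name}: {zone(f)}")
```

The scores themselves still have to come out of the group's discussion. The code only makes explicit which response each agreed position implies.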

How the matrix connects to theme selection

What makes this tool different from a standard risk matrix or PESTLE analysis is that it connects directly to the three tests that determine whether a force qualifies as a strategic theme:

Has it been growing structurally for a decade (structural shift)?

Does it affect multiple industries (cross-industry relevance)?

Can the firm allocate capital and talent against it (actionability)?

The momentum dimension captures structural shift directly. Cross-industry relevance shows up in how broadly a force’s impact reaches across the firm. And actionability is reflected in the specificity of the response the matrix demands: commit, invest, or defend are operational instructions, not vague prescriptions like “monitor” or “be aware.” The matrix is the mechanism through which theme selection happens.
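The three tests can also be written down as an explicit checklist, which is sometimes useful when the group disagrees about whether a force has earned theme status. The sketch below is a minimal illustration. The field names are hypothetical, and reading "multiple industries" as two or more is an assumption for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """A force being considered as a strategic theme (hypothetical fields)."""
    name: str
    years_of_structural_growth: int
    industries_affected: int
    can_allocate_capital_and_talent: bool


def qualifies_as_theme(c: Candidate) -> bool:
    """Apply the three tests: structural shift, cross-industry relevance, actionability."""
    structural_shift = c.years_of_structural_growth >= 10  # a decade of growth
    cross_industry = c.industries_affected >= 2            # assumed reading of "multiple"
    actionable = c.can_allocate_capital_and_talent
    return structural_shift and cross_industry and actionable


# Hypothetical candidates, for illustration only.
candidates = [
    Candidate("Force A", 12, 5, True),
    Candidate("Force B", 3, 4, True),    # too recent to count as a structural shift
    Candidate("Force C", 15, 6, False),  # real, but the firm cannot act on it
]

themes = [c.name for c in candidates if qualifies_as_theme(c)]
print(themes)
```

A force that fails any one test stays on the matrix as a driver to watch. It does not become a theme.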

The matrix serves as a living document. Forces change. A driver that sat in the monitoring zone two years ago may have accelerated into the commitment zone. The value of the matrix is that it makes these movements visible and forces the leadership team to respond to them, which prevents the common failure of pretending the strategic environment has not changed since the last review.

Building it for the first time

The initial exercise, building the matrix for the first time, takes genuine effort. The SPINE framework provides 292 individual drivers of transformation organised across five forces: Society, Power, Innovation, Nature, and Economy. A leadership team scanning this taxonomy for the first time should expect to identify 10 to 15 drivers with material relevance to their competitive context. Plotting those drivers on the Growth-Impact Matrix and arriving at a shared view of which forces demand strategic response will typically require two to three half-day sessions. After the initial build, the process shifts to maintenance and decision-making.

How often, and for how long

A quarterly review is the right cadence for most organisations. It aligns with the business planning cycle without creating the overhead of monthly meetings that compete with operational demands. Firms in fast-moving industries, those in the middle of a strategic crisis, or early-stage companies where the competitive landscape is still forming may need a higher frequency, but for established firms operating in industries that change over years rather than weeks, quarterly works well. Each session should be two to three hours, structured around three questions:

Which forces have moved on the matrix since the last review?

What new forces have appeared that were not previously on the radar?

And most critically, which capital or talent decisions should change as a result?

Not every force needs rescoring each quarter; the focus should be on the three or four where momentum or impact may have shifted, with a full rescore of the entire matrix annually.

That last question is the one that separates a useful foresight process from a sophisticated monitoring exercise. If the quarterly review does not produce at least one specific recommendation about resource allocation, hiring, investment, or divestment, then the process is not connected to decisions and will eventually be abandoned. The firms that dominated their industries did not merely monitor forces. They organised around them, which means every foresight discussion ended with a decision or a reaffirmation of a previous one.

Between quarterly sessions, one member of the foresight group should own the monitoring function. This is a standing responsibility, not a full-time role: flag any significant movement in the forces on the matrix, any new force that has appeared, or any event that changes the momentum or impact assessment of an existing force. When something material changes, the group reconvenes. A well-functioning process would have triggered an interim review when the European energy crisis reshaped operating costs across the continent in 2022, and again when ChatGPT’s release in November of that year forced every firm with AI on its matrix to reassess how fast the force was moving. The cadence is quarterly by default, with ad hoc sessions when the environment moves faster than the cycle.
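The first two review questions, and the monitoring role between sessions, both come down to comparing the current matrix with the last agreed one. A minimal sketch of that comparison follows. The snapshot format (force name mapped to a momentum level and an impact label) and all force names are assumptions made for illustration.

```python
from typing import Dict, List, Tuple

# Assumed snapshot format: force name -> (momentum level, impact label).
Snapshot = Dict[str, Tuple[str, str]]


def review_agenda(previous: Snapshot, current: Snapshot) -> Dict[str, List[str]]:
    """List forces whose position changed and forces that are new since the last review."""
    moved = sorted(
        name for name in current
        if name in previous and current[name] != previous[name]
    )
    new = sorted(name for name in current if name not in previous)
    return {"moved": moved, "new": new}


# Hypothetical snapshots, for illustration only.
last_quarter: Snapshot = {
    "Force A": ("accelerated", "opportunity"),
    "Force B": ("rapid", "threat"),
}
this_quarter: Snapshot = {
    "Force A": ("rapid", "opportunity"),   # momentum has increased
    "Force B": ("rapid", "threat"),        # unchanged
    "Force C": ("baseline", "threat"),     # newly on the radar
}

agenda = review_agenda(last_quarter, this_quarter)
print(agenda)
```

The third question, which capital or talent decisions should change, cannot be automated. The comparison only tells the group where to spend its two to three hours.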

Where foresight processes break down

Three failures recur. Information overload is the most common. The goal of foresight is reduction, not accumulation: selecting three or four forces to build around from a landscape of hundreds. A matrix with 40 forces plotted on it serves research purposes, not strategic ones. The discipline of limiting the matrix to 10 to 15 forces, and the themes derived from them to three or four, is what gives the process strategic value.

Disconnection from capital kills the process more quietly. A foresight exercise that produces insight but never changes a budget line will be abandoned within a year. The CFO’s presence in the room exists to ensure that when the group concludes a force has accelerated, the conversation immediately turns to what that means for next quarter’s investment plan.

The deeper failure is treating foresight as a support function. If the process is owned by a strategy team that reports to the leadership rather than being run by the leadership itself, the output will be treated as advisory, not directive. My research showed this clearly: the direction of a leadership team’s attention predicted competitive outcomes years in advance. Attention that is delegated to a support function is, by definition, not the leadership team’s attention.

The test

The test is three simple questions.

What are the three structural forces your strategy is organised around?

When did the leadership team last change a resource allocation decision because of a shift in one of those forces?

Who in the organisation is responsible for monitoring those forces?

If the answers come easily, the process is connected to decisions. If they do not, the process is producing awareness without action, which is the gap that separates the firms that execute well but lose from the firms that dominate.